
Jascha Grübel is an Assistant Professor for Data Science Systems at Wageningen University

/ Digital Twinning

/ Experimentation

/ XR Visualisation

/ Mobility & Transport

/ Cognition

I am an Assistant Professor for Data Science Systems at Wageningen University & Research, in the Laboratory of Geo-information Science and Remote Sensing. Previously, I was a postdoctoral researcher at the Center for Sustainable Future Mobility and the Geoinformation Engineering Group at ETH Zürich, where I led the project "A Digital Twin for the Swiss Mobility System". I am the architect of the Open Digital Twin Platform (ODTP) and the lead developer of Spatial Performance Assessment for Cognitive Evaluation (SPACE).

I am working on a wide range of topics involving human motion at all scales. As a spatial data scientist, I bring together Computer Science, Cognitive Science and Spatial Sciences.

Locomotion

Navigation

Crowd dynamics

Individual mobility

Transport

Say Hello to ODTP, the digital twin building tool of the future.

/ Be your own data science builder

Create data sources intuitively

Rely on data semantics

Work with many open-source analysis tools

Do more with your data

XR Research

Fused Twins

Blog

I don’t always post blog entries, but when I do, you will find them here:


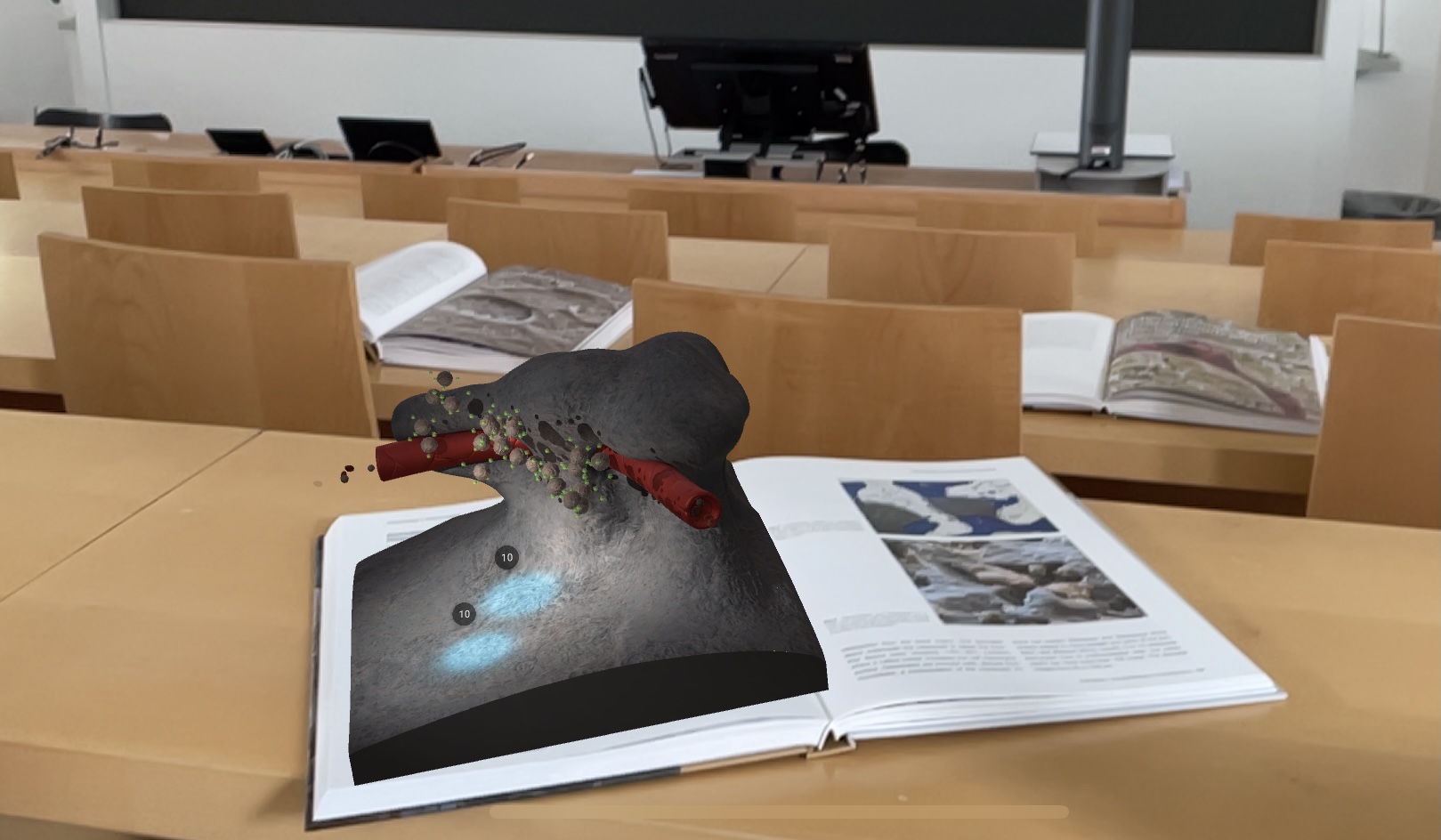

Paper on Game AR Osteoclasts

I ended up as an academic, but this week I am happy to announce my newest first-author paper in JMIR Serious Games. It describes a learning game I created together with the ETH Game Technology Center, the University of Zurich, and the Medical University of Vienna, with support from Quintessence Publishing Deutschland. Our Game…


Project start for Open Digital Twin Platform

Last year, during my first steps as a postdoc, I applied for the institutional Swiss Open Research Data Grants. For my work at the Center for Sustainable Future Mobility (CSFM) at ETH Zürich, I gained the support of Prof. Kay W. Axhausen and Prof. Martin Raubal to submit a project together with the Swiss Data…


So you want to build your Unity project on a private Jenkins for iOS and Android?

This is a question that has recently haunted me. Previously, at the Game Technology Center (GTC) at ETH Zürich, I was privileged enough to rely on an already deployed pipeline. It creaks sometimes, but in general it works well. For a new project with Future Health Technologies at the Singapore ETH Center, there was no such luck.…

My Projects

My current main research projects are related to the Open Digital Twin Platform (ODTP). You can also find my work on cognition under SPACE.

Systems & Platforms

/ ODTP

/ SPACE

/ EVE

/ ExaC

Building Research

/ Occupancy

/ Office perception

/ Wayfinding

Serious Games

/ VR Osteoclasts

/ AR Osteoclasts

/ SPACE

Let’s Connect & Research

Homepage: http://jascha.gruebel.io

ORCID: 0000-0002-6428-4685

Google Scholar: Dox0S8IAAAAJ

Researchgate: Jascha-Gruebel

LinkedIn: jascha-gruebel

Twitter: jascha_gruebel

DBLP: 208/8403

WoS Researcher ID: AAX-4975-2020

Scopus ID: 57195806789

OSF: beqxa